
Brain-inspired AI hardware helps autonomous devices operate efficiently and independently

The human brain constantly makes decisions. It requires minimal power to move bodies in a desired direction or avoid an object. A Purdue University engineer uses the brain's efficiency as inspiration to help autonomous vehicles, such as drones and robots, make crucial, time-sensitive decisions while operating in the field.

Kaushik Roy, the Edward G. Tiedemann, Jr. Distinguished Professor of Electrical and Computer Engineering in Purdue's Elmore Family School of Electrical and Computer Engineering and director of the Institute of Chips and AI, is developing brain-inspired hardware that enables autonomous devices to efficiently navigate and adapt to their environment. This work is published in Communications Engineering.

AI-powered machines have advanced significantly over the past several decades thanks to machine learning, which enables these devices to recognize patterns and make predictions or decisions. But the algorithms that facilitate this learning require immense amounts of energy to operate due to their intensive calculations and the design of the hardware that runs them.

"Today's AI devices are designed with separate processing and memory units," Roy said. "It takes a lot of energy to move the data from the memory to the processing unit and then perform all these complex operations. This is particularly problematic for machines like drones that need to process information quickly and efficiently to avoid obstacles while completing their assigned tasks."

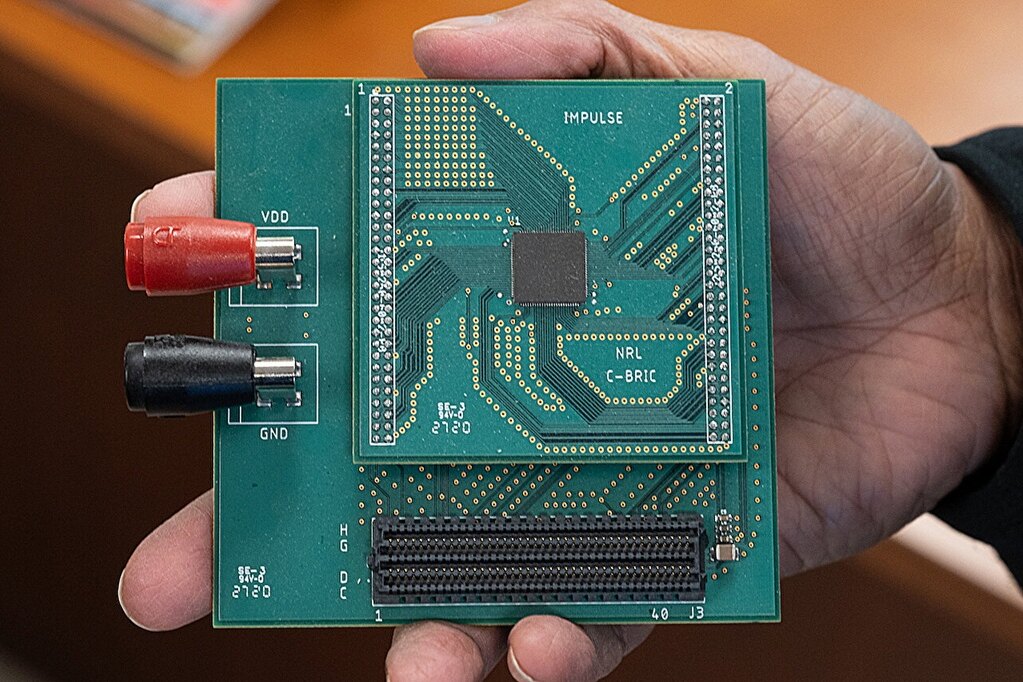

To solve this energy problem, Roy and his team in the Nanoelectronics Research Laboratory are developing a system of sensors, algorithms and hardware that allow autonomous, vision-based vehicles to move from point A to B while avoiding obstacles, optimizing energy use and operating independently.

"From the little we understand of the brain, computation and memory are not separated, essentially making it the most efficient processor imaginable," Roy said. "That's why we're taking more direct cues from the brain and co-designing hardware and algorithms that will optimize a variety of AI devices."

Purdue University engineer Kaushik Roy uses the brain's efficiency as inspiration to help autonomous, vision-based vehicles navigate their surroundings. Roy and his team are developing an energy-efficient system of sensors, algorithms and hardware that enables drones and robots to avoid obstacles. Credit: Purdue University/John Underwood

Algorithms power AI cognition

At the heart of this system are algorithms called spiking neural networks (SNNs). All neural networks are composed of layers of artificial neurons that activate when presented with information, much like how a biological neuron works within the brain.

However, unlike the brain, all the neurons in a traditional neural network activate with every input of information, thereby expending large amounts of energy with every calculation and every decision or action taken by the network.

On the other hand, the individual neurons in SNNs only fire, or "spike," when they receive important information. What is deemed "important" to a particular neuron is based on its membrane potential, an internal value that builds up as inputs arrive, and on an assigned threshold that determines when the neuron activates.

An input or piece of data must reach that threshold for a neuron to spike and produce a reaction. Therefore, each neuron only processes and stores "memories" relevant to its function.
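The threshold behavior described above can be illustrated with a leaky integrate-and-fire neuron, a common textbook model of a spiking neuron. This is a minimal sketch and not the team's implementation: the function name `simulate_lif`, the `threshold` and `leak` values, and the reset-to-zero rule are all assumptions chosen for illustration.

```python
def simulate_lif(inputs, threshold=1.0, leak=0.9):
    """Simulate one leaky integrate-and-fire neuron (illustrative model only).

    `inputs` is a sequence of input values, one per time step.
    Returns a list of 0/1 spikes, one per time step.
    """
    potential = 0.0  # membrane potential: carries a trace of past inputs
    spikes = []
    for current in inputs:
        # The old potential decays by the `leak` factor, then the new input is added.
        potential = leak * potential + current
        if potential >= threshold:
            spikes.append(1)   # threshold reached: the neuron fires
            potential = 0.0    # assumed reset rule after a spike
        else:
            spikes.append(0)   # below threshold: the neuron stays silent
    return spikes


# Six weak inputs: none reaches the threshold alone, but they accumulate.
spikes = simulate_lif([0.3] * 6)
print(spikes)  # [0, 0, 0, 1, 0, 0]
```

No single input of 0.3 is enough to trigger a spike, but the potential keeps part of each earlier input, so the fourth one pushes it over the threshold. The neuron is silent in five of the six steps, which is where the energy savings of selective firing come from.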

"The membrane potential of each neuron acts as memory, allowing the network to remember the past, much like biological neurons do," Roy said.

"This behavior turns out to be very useful for sequential and time-based tasks. These are the types of tasks that drones and other autonomous vehicles are performing as they collect information from their environment and use it to make decisions about what to do next."

While a neural network that fires selectively is a strength in terms of processing power, it introduces a weakness in training. Traditional neural networks learn from their mistakes by relying on backpropagation—a constant flow of information through the network's layers of neurons that helps figure out where and how mistakes occurred.

The selective firing of SNNs produces inconsistent activity and less information. And while the timing of a spike is critical to improving an SNN, the backpropagation in a traditional system is designed to track only where errors occur, not when.

To address these problems, Roy and his team have developed hybrid neural networks that combine strengths of both traditional neural networks and SNNs. This combination captures timing information effectively while remaining trainable and compact enough for autonomous devices.

Event-based cameras enhance navigation

Two such algorithms, called Spike-FlowNet and Adaptive Safety Margin Algorithm, help special event-based cameras attached to the vehicles more effectively scan and process their environment.

Much like the individual neurons in an SNN, the individual pixels in an event-based camera operate independently, and the camera only records when there's a movement or change happening in the pixels. This differs from traditional cameras, which record an entire scene—all the pixels at all times.
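The difference between the two camera types can be sketched by comparing two small grayscale frames and reporting only the pixels that changed. This is a rough illustration under stated assumptions: a real event-based sensor responds per pixel and asynchronously rather than by comparing frames, and the function name `frame_to_events`, the `threshold` value and the `(row, column, polarity)` event format are hypothetical.

```python
def frame_to_events(previous, current, threshold=10):
    """Return (row, col, polarity) for each pixel whose brightness changed enough.

    `previous` and `current` are grayscale frames given as lists of rows.
    Polarity is +1 if the pixel got brighter and -1 if it got darker.
    """
    events = []
    for r, (prev_row, cur_row) in enumerate(zip(previous, current)):
        for c, (old, new) in enumerate(zip(prev_row, cur_row)):
            change = new - old
            if abs(change) >= threshold:  # small changes produce no event
                events.append((r, c, 1 if change > 0 else -1))
    return events


previous = [[100, 100, 100],
            [100, 100, 100]]
current = [[100, 140, 100],
           [60, 100, 105]]

events = frame_to_events(previous, current)
print(events)  # [(0, 1, 1), (1, 0, -1)]
```

A traditional camera would hand all six pixel values to the next processing stage. Here only two events are produced, and a completely static scene would produce none.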
